The transition from conversational AI to autonomous agentic systems is the defining technological shift in 2026. We have moved decisively past the era of monolithic "God Models" that simply respond to text prompts. Today, the industry is architecting agentic workflows: systems capable of autonomous perception, multi-step reasoning, tool execution, and self-correction over extended time horizons.

However, the methods by which these agents operate have aggressively forked. Developers, researchers, and enterprises are actively navigating five distinct architectural methodologies to achieve autonomy. The choice of architecture dictates not only the technical infrastructure required but also the fundamental ceiling of a system’s reliability and economic potential.

Below is a deeply technical breakdown of the defining AI agent architectures in 2026, their pros and cons, and a conclusive analysis of which methodology is positioned to capture the vast majority of the multi-trillion-dollar economic value in the coming years.

1. General Computer Use (GCU) & Browser / OS Takeover

General Computer Use (GCU) represents the "human-mimetic" path to automation. Spearheaded by frameworks like OpenClaw and models such as Claude Co-worker, these agents do not rely on structured backend APIs. Instead, they interact with the computer exactly as a human does: by looking at the screen, moving a virtual cursor, typing on a virtual keyboard, and navigating graphical user interfaces (GUIs).

Architectural Methodology

GCU relies heavily on Vision-Language-Action (VLA) models. These systems ingest desktop screenshots as visual tokens, parse the screen with pixel-level Optical Character Recognition (OCR), and, where available, interpret the underlying Document Object Model (DOM) or OS accessibility trees. When a user asks an agent to "pull the Q3 revenue from our legacy accounting software," the agent visually locates the application icon, double-clicks at its screen coordinates, scans the resulting window for the search bar, and types the query.
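The perceive-decide-act loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `Element`, `Action`, `propose_action`, and `run_episode` names are hypothetical, the "vision model" is a hard-coded policy, and each screenshot is simulated as a list of detected labels with pixel coordinates rather than real pixels.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Element:
    """Something the (hypothetical) vision model detected on screen."""
    label: str
    x: int  # centre of the bounding box, in screen pixels
    y: int

@dataclass
class Action:
    kind: str  # "double_click", "type", or "done"
    x: int = 0
    y: int = 0
    text: str = ""

def propose_action(goal: str, screen: List[Element], history: List[Action]) -> Action:
    """Toy policy standing in for a VLA model call: look at the screen, pick one action."""
    visible = {e.label: e for e in screen}
    if history and history[-1].kind == "type":
        return Action("done")  # query already typed; stop
    if "Search" in visible:
        bar = visible["Search"]
        return Action("type", bar.x, bar.y, text=goal)
    if "Accounting" in visible:
        icon = visible["Accounting"]
        return Action("double_click", icon.x, icon.y)
    return Action("done")

def run_episode(goal: str, screenshots: List[List[Element]], max_steps: int = 10) -> List[Action]:
    """Perceive -> decide -> act loop; screenshots[i] simulates the screen before step i."""
    history: List[Action] = []
    for step in range(max_steps):
        screen = screenshots[min(step, len(screenshots) - 1)]
        action = propose_action(goal, screen, history)
        history.append(action)  # a real agent would dispatch this to the OS here
        if action.kind == "done":
            break
    return history

desktop = [Element("Accounting", 40, 300)]
app_window = [Element("Search", 512, 80)]
actions = run_episode("Q3 revenue", [desktop, app_window])
print([a.kind for a in actions])  # ['double_click', 'type', 'done']
```

Every action is expressed as screen coordinates derived from what the model currently sees, which is what makes the approach universal and, as the cons below explain, fragile.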

Pros

- Universal Compatibility: GCU is the ultimate "black box" solver. If a human can do it on a screen, the agent can theoretically automate it. It requires zero API keys, no backend integration, and seamlessly operates across legacy software, Citrix environments, and uncooperative web portals.

- Low Barrier to Entry: It requires almost no traditional software engineering to deploy. The interface is simply natural language mapped directly onto desktop actions.

Cons

- Extreme Brittleness: GCU is highly susceptible to visual noise. If an application updates its CSS, a button shifts five pixels to the left, or an unexpected modal pop-up appears, the agent’s coordinate mapping breaks, leading to "hallucinated clicks."

- High Latency and Token Burn: Processing high-resolution images for every single step of a workflow requires massive token consumption and introduces high latency (often multiple seconds per action), making it unviable for high-speed, high-volume data processing.

- Security and Governance: Giving an autonomous system unrestricted access to a mouse and keyboard on an enterprise machine is an auditing and compliance nightmare.
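To make the brittleness point concrete, the toy example below replays a click recorded against one layout after a hypothetical update shifts every control five pixels to the left. The layout numbers and helper names (`hit_test`, `centre_of`) are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass
class Button:
    label: str
    left: int
    top: int
    width: int
    height: int

    def contains(self, x: int, y: int) -> bool:
        return self.left <= x < self.left + self.width and self.top <= y < self.top + self.height

def hit_test(buttons: List[Button], x: int, y: int) -> Optional[str]:
    """Return the label of whatever button sits under (x, y), or None for a miss."""
    for b in buttons:
        if b.contains(x, y):
            return b.label
    return None

def centre_of(buttons: List[Button], label: str) -> Optional[Tuple[int, int]]:
    """Re-locate a control by its label on the current screen instead of replaying old pixels."""
    for b in buttons:
        if b.label == label:
            return (b.left + b.width // 2, b.top + b.height // 2)
    return None

# Hypothetical layout before and after a UI update that shifts everything 5 px left.
v1 = [Button("Export", 100, 200, 60, 24), Button("Delete", 160, 200, 60, 24)]
v2 = [Button(b.label, b.left - 5, b.top, b.width, b.height) for b in v1]

recorded_click = (157, 210)                    # learned against v1, near Export's right edge
print(hit_test(v1, *recorded_click))           # Export
print(hit_test(v2, *recorded_click))           # Delete  <- the "hallucinated click"
print(hit_test(v2, *centre_of(v2, "Export")))  # Export
```

Re-locating controls by label on every step helps with small layout shifts, but only if the perception layer still recognizes the control; it does nothing for an unexpected modal covering the target.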

This space is currently dominated by massive foundation model providers building native vision-to-action capabilities, alongside no-code startups making GUI automation accessible to non-technical users.

Company / Framework Comparison

2. Graph-Based API Orchestration

If GCU is the human-mimetic approach, Graph-Based API Orchestration is the systemic, "machine-native" approach. Frameworks like LangGraph have revolutionized this space by abandoning linear chains (which collapse under the weight of complex reasoning) in favor of stateful, cyclical graphs.

Architectural Methodology

In this architecture, workflows are modeled as graphs where nodes represent specific execution steps (like an LLM call or a Python script) and edges define the conditional control flow. State is maintained globally, often defined by strict schemas (like Pydantic models in Python).
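A minimal, dependency-free sketch of the node/edge/state model follows. It is not LangGraph's API (LangGraph provides its own `StateGraph` class); the `Graph` class, the `fetch` and `summarize` stub nodes, and the `END` sentinel are hypothetical, and a frozen dataclass stands in for a Pydantic schema so the example runs with the standard library only.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, List, Tuple, Union

END = "__end__"

@dataclass(frozen=True)
class State:
    """Shared, typed state every node reads; nodes return partial updates."""
    query: str
    records: Tuple[str, ...] = ()
    summary: str = ""

Node = Callable[[State], dict]
Edge = Union[str, Callable[[State], str]]  # fixed successor or a routing function

class Graph:
    def __init__(self) -> None:
        self.nodes: Dict[str, Node] = {}
        self.edges: Dict[str, Edge] = {}

    def add_node(self, name: str, fn: Node) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: Edge) -> None:
        self.edges[src] = dst

    def run(self, start: str, state: State, max_steps: int = 20) -> Tuple[State, List[Tuple[str, Tuple[str, ...]]]]:
        trace = []  # audit log: which node ran and which state keys it changed
        node = start
        for _ in range(max_steps):
            update = self.nodes[node](state)
            state = replace(state, **update)
            trace.append((node, tuple(sorted(update))))
            edge = self.edges[node]
            node = edge(state) if callable(edge) else edge
            if node == END:
                return state, trace
        raise RuntimeError("step budget exhausted; possible runaway cycle")

# Hypothetical nodes: a data-fetch stub and a summarizer stub.
def fetch(state: State) -> dict:
    return {"records": ("row-1",) if "Q3" in state.query else ()}

def summarize(state: State) -> dict:
    return {"summary": f"{len(state.records)} record(s) found"}

g = Graph()
g.add_node("fetch", fetch)
g.add_node("summarize", summarize)
g.add_edge("fetch", lambda s: "summarize" if s.records else END)  # conditional edge
g.add_edge("summarize", END)

final, trace = g.run("fetch", State(query="Q3 revenue"))
print(final.summary)  # 1 record(s) found
print(trace)          # [('fetch', ('records',)), ('summarize', ('summary',))]
```

Because every node returns a partial update to a typed state, the `trace` list doubles as a basic audit log: it records which node ran and which fields it changed, in order.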

When a payload enters the graph, an orchestration layer (often utilizing an in-memory data platform like Redis for sub-millisecond latency) passes the context state to specialized "router nodes." These routers do not generate conversational text; they output structured JSON to decide which API tool to call next. If a tool fails, the graph uses cyclic edges to loop back, allowing the agent to observe the error, self-correct its payload, and retry the API call autonomously.
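The router-plus-retry cycle can be sketched as follows, assuming a router that must answer in JSON and a tool with a strict input contract. `fake_router_llm` is a hypothetical stand-in for a model call (its "self-correction" is scripted), and `create_ticket` is an invented tool; no real ticketing API is implied.

```python
import json
from datetime import date
from typing import List, Optional, Tuple

def fake_router_llm(task: str, last_error: Optional[str]) -> str:
    """Hypothetical stand-in for an LLM router constrained to emit JSON only."""
    if last_error is None:
        # First attempt: a plausible model mistake (non-ISO date).
        args = {"title": task, "due": "31/12/2026"}
    else:
        # Having seen the tool's error message, the router re-emits a corrected payload.
        args = {"title": task, "due": "2026-12-31"}
    return json.dumps({"tool": "create_ticket", "args": args})

def create_ticket(title: str, due: str) -> dict:
    """Hypothetical tool with a strict contract: due must be an ISO date."""
    date.fromisoformat(due)  # raises ValueError for anything else
    return {"status": "created", "title": title, "due": due}

TOOLS = {"create_ticket": create_ticket}

def route_and_call(task: str, max_attempts: int = 3) -> Tuple[dict, List[str]]:
    error: Optional[str] = None
    errors: List[str] = []
    for _ in range(max_attempts):
        decision = json.loads(fake_router_llm(task, error))  # structured output, not prose
        try:
            return TOOLS[decision["tool"]](**decision["args"]), errors
        except ValueError as exc:
            error = f"{type(exc).__name__}: {exc}"  # observation fed into the next cycle
            errors.append(error)
    raise RuntimeError(f"giving up after {max_attempts} attempts: {errors}")

result, errors = route_and_call("Renew vendor contract")
print(result["due"], len(errors))  # 2026-12-31 1
```

Feeding the exception text back into the router is the essential design choice: the error message becomes an observation for the next pass through the cycle, and `max_attempts` bounds the loop.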

Pros

- High Determinism and Reliability: Because agents interact through rigid, documented API contracts rather than visual GUI parsing, the execution is highly predictable and resilient to frontend software updates.

- Auditability: Every node traversal, state update, and API call is logged. Enterprises can trace exactly why an agent took a specific action, which is mandatory for compliance in sectors like finance and healthcare.

- Scale and Speed: API orchestration operates at network speed. An agent can pull data from Salesforce, cross-reference it with a Postgres database, and push an update to Jira in milliseconds.

Cons

- Steep Engineering Curve: Building resilient graphs requires traditional software engineering discipline—managing state schemas, handling API rate limits, and building persistent memory tiers.

- The "API Ceiling": These agents are entirely bottlenecked by the availability and quality of external APIs. If a target system lacks a programmatic endpoint, the graph cannot interact with it.
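Rate-limit handling is a good example of the engineering discipline the first con refers to. One widely used pattern is capped exponential backoff with "full jitter"; the sketch below assumes a hypothetical `RateLimited` exception that an API client wrapper raises on HTTP 429, and injects `sleep` and `rng` so it can be tested without actually waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

class RateLimited(Exception):
    """Hypothetical error a client wrapper raises when an API answers HTTP 429."""

def call_with_backoff(fn: Callable[[], T], max_retries: int = 5, base: float = 0.5, cap: float = 8.0,
                      sleep: Callable[[float], None] = time.sleep,
                      rng: Callable[[], float] = random.random) -> T:
    """Retry fn on RateLimited, sleeping a random fraction of a capped exponential delay."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimited:
            if attempt == max_retries:
                raise
            sleep(rng() * min(cap, base * 2 ** attempt))

# Usage: a flaky endpoint that is rate-limited twice, then succeeds.
calls = {"n": 0}

def flaky() -> str:
    calls["n"] += 1
    if calls["n"] <= 2:
        raise RateLimited()
    return "ok"

delays = []
print(call_with_backoff(flaky, sleep=delays.append, rng=lambda: 1.0))  # ok
print(delays)  # [0.5, 1.0]
```

Passing `rng=lambda: 1.0` disables the randomness for a deterministic run; with the default `random.random`, retries from many agents are spread out so they do not hit the API in lockstep.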

This is the "plumbing" of the enterprise AI world. These companies provide the infrastructure required to build stateful, cyclical, and highly deterministic workflows that interact with backend systems.

Company / Framework Comparison

3. Multi-Agent Systems (Swarm Intelligence)

The concept of a single, monolithic agent handling an entire workflow is largely obsolete in 2026. The industry has aggressively pivoted to Multi-Agent Systems (MAS) or "Swarm Architectures," championed by frameworks like CrewAI and Microsoft AutoGen.

Architectural Methodology

Instead of one massive prompt trying to solve a complex problem, MAS utilizes "Task Decomposition." A user request hits an Intent Router, which breaks the complex task into sub-tasks. These sub-tasks are delegated to highly specialized micro-agents, each given a narrow persona, specific tools, and a distinct system prompt.
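The decomposition step can be sketched as a router that emits (role, sub-task) pairs and a dispatcher that attaches each specialist's persona and tool allow-list. Everything here is hypothetical: the agent names, prompts, tool names, and the keyword-based `intent_router`, which stands in for an LLM call with structured output.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass(frozen=True)
class AgentSpec:
    name: str
    system_prompt: str
    tools: Tuple[str, ...]

# Hypothetical registry of narrow micro-agents; names, prompts, and tools are illustrative.
REGISTRY: Dict[str, AgentSpec] = {
    "research": AgentSpec("research", "You gather sources and cite every claim.", ("web_search",)),
    "analysis": AgentSpec("analysis", "You compute figures only from supplied data.", ("sql_query",)),
    "writing": AgentSpec("writing", "You draft prose from the analysis. Add no new facts.", ()),
}

def intent_router(request: str) -> List[Tuple[str, str]]:
    """Toy decomposition by keyword; a real Intent Router would be a structured-output LLM call."""
    subtasks = [("research", f"Collect sources for: {request}")]
    if any(word in request.lower() for word in ("revenue", "cost", "growth")):
        subtasks.append(("analysis", f"Quantify: {request}"))
    subtasks.append(("writing", f"Write a brief on: {request}"))
    return subtasks

def dispatch(request: str) -> List[dict]:
    """Pair each sub-task with its specialist's persona and tool allow-list."""
    jobs = []
    for role, subtask in intent_router(request):
        spec = REGISTRY[role]
        jobs.append({
            "agent": spec.name,
            "tools": spec.tools,
            "messages": [
                {"role": "system", "content": spec.system_prompt},
                {"role": "user", "content": subtask},
            ],
        })
    return jobs

for job in dispatch("Explain Q3 revenue growth"):
    print(job["agent"], job["tools"])
```

Keeping each agent's tools to an explicit allow-list is what makes the personas "narrow" in practice: the writing agent above simply has no tool it could use to fetch new facts.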

For example, an autonomous software engineering swarm might include:

- A Planner Agent that drafts the architecture.

- A Coder Agent that writes the specific functions.

- A Critic/Verifier Agent that aggressively reviews the Coder’s output against the Planner's specs.

- An Executor Agent that runs the test suite.

These agents use hierarchical or concurrent communication protocols to debate, verify, and iterate on each other's work before presenting a final output.
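The Planner/Coder/Critic/Executor loop can be sketched with scripted stand-ins for each agent. The functions are hypothetical, the Coder's "revision" is hard-coded, and `exec` is used only to keep the example self-contained; any real Executor should run generated code in a sandbox. The hierarchy here is deliberately simple: the Executor runs the Planner's test cases and the Critic turns failures into feedback for the next round.

```python
from typing import Optional, Tuple

def planner(task: str) -> dict:
    """Hypothetical Planner: turns the task into a spec the other agents check against."""
    return {"function": "add", "spec": "add(a, b) returns a + b", "cases": [((2, 3), 5), ((-1, 1), 0)]}

def coder(plan: dict, feedback: Optional[str]) -> str:
    """Hypothetical Coder: the first draft is buggy; the revision responds to feedback."""
    op = "-" if feedback is None else "+"
    return f"def {plan['function']}(a, b):\n    return a {op} b\n"

def executor(plan: dict, source: str) -> Optional[str]:
    """Runs the Planner's test cases. exec() is for illustration only; sandbox real code."""
    namespace: dict = {}
    exec(source, namespace)
    fn = namespace[plan["function"]]
    for args, expected in plan["cases"]:
        got = fn(*args)
        if got != expected:
            return f"{plan['function']}{args} returned {got}, expected {expected}"
    return None

def critic(plan: dict, source: str, test_failure: Optional[str]) -> Optional[str]:
    """Rejects on failing tests or on drift from the Planner's spec; None means approved."""
    if test_failure:
        return test_failure
    if f"def {plan['function']}(" not in source:
        return f"missing function {plan['function']}"
    return None

def run_swarm(task: str, max_rounds: int = 3) -> Tuple[str, int]:
    plan = planner(task)
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        source = coder(plan, feedback)
        feedback = critic(plan, source, executor(plan, source))
        if feedback is None:
            return source, round_no
    # Hard stop so a disagreement cannot turn into an endless debate.
    raise RuntimeError(f"no agreement after {max_rounds} rounds: {feedback}")

source, rounds = run_swarm("add two numbers")
print(rounds)  # 2
```

The `max_rounds` cap is one simple guard against the infinite debate loops mentioned under the cons.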

Pros

- Massive Reduction in Hallucinations: Forcing agents to defend their logic to a "Critic Agent" creates a self-healing verification loop that dramatically improves output quality and logical coherence.

- Parallel Execution: In a concurrent architecture, an orchestrator can spin up 50 research agents simultaneously to scrape 50 different websites, drastically reducing time-to-completion for wide-scale tasks.

- Fault Isolation: If the Coder Agent fails, it doesn't crash the entire system. The orchestrator simply flags the error, passes the stack trace to a debugging agent, and keeps the rest of the workflow alive.
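Parallel execution and fault isolation fit together naturally with `asyncio.gather`. The sketch assumes a hypothetical `research_agent` coroutine that simulates latency with `asyncio.sleep` and fails for one URL; the URLs are placeholders.

```python
import asyncio
from typing import Dict, List, Tuple

async def research_agent(url: str) -> str:
    """Hypothetical research agent; a real one would fetch the page and call a model."""
    await asyncio.sleep(0.01)  # stands in for network and model latency
    if "broken" in url:
        raise ConnectionError(f"could not reach {url}")
    return f"summary of {url}"

async def fan_out(urls: List[str]) -> Tuple[Dict[str, str], Dict[str, str]]:
    # return_exceptions=True collects failures as values instead of raising the first one,
    # so the orchestrator still receives every successful result.
    results = await asyncio.gather(*(research_agent(u) for u in urls), return_exceptions=True)
    done = {u: r for u, r in zip(urls, results) if not isinstance(r, BaseException)}
    failed = {u: repr(r) for u, r in zip(urls, results) if isinstance(r, BaseException)}
    return done, failed

urls = [f"https://example.com/page{i}" for i in range(49)] + ["https://broken.example"]
done, failed = asyncio.run(fan_out(urls))
print(len(done), len(failed))  # 49 1
```

All 50 agents wait concurrently, so wall-clock time tracks the slowest agent rather than the sum, and the failed URL can be handed to a retry or debugging agent without discarding the other 49 results.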

Cons

- Coordination Overhead: The "chatter" between agents requires robust message queuing and handoff protocols. Without explicit governance, agents can get stuck in infinite debate loops.

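- Compounding Cost: Having five agents talk to each other to solve a single problem multiplies token consumption. When every agent re-reads the shared conversation, cost grows roughly quadratically with both the number of agents and the number of debate rounds.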
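A simplified cost model shows how quickly shared-transcript debate adds up. It assumes every agent re-reads the full transcript each round and then writes one fixed-length message, and it ignores system prompts, tool output, and prompt caching; the 4 rounds and 500 tokens per message are illustrative numbers, not measurements. In closed form, with n agents, R rounds, and m tokens per message, the total is n^2 * m * R * (R - 1) / 2 + n * m * R.

```python
def swarm_tokens(agents: int, rounds: int, tokens_per_message: int) -> int:
    """Simplified model: each round, every agent re-reads the whole shared transcript,
    then writes one message. Ignores system prompts, tool output, and prompt caching."""
    transcript = 0
    total = 0
    for _ in range(rounds):
        total += agents * transcript           # input tokens: everyone re-reads history
        total += agents * tokens_per_message   # output tokens: everyone replies
        transcript += agents * tokens_per_message
    return total

for n in (1, 5, 10):
    print(n, swarm_tokens(agents=n, rounds=4, tokens_per_message=500))
# 1 5000
# 5 85000
# 10 320000
```

Under this model, doubling the swarm from 5 to 10 agents roughly quadruples total tokens (85,000 to 320,000): the cost is multiplicative in both agent count and rounds, which is why handoff protocols that pass summaries instead of full transcripts matter.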

The swarm ecosystem is rapidly maturing from experimental GitHub repositories into massive enterprise-grade platforms capable of coordinating dozens of specialized agents simultaneously.

Company / Framework Comparison

4. Edge & Local-First Agents (The "Thick Client" Revolution)

As cloud computing costs for heavy agentic workflows skyrocketed, the market saw a fierce counter-movement toward Edge AI. In 2026, Small Language Models (SLMs), typically under 10 billion parameters, have become the default engine for privacy-sensitive and latency-critical operations.

Architectural Methodology

Through advanced knowledge distillation, quantization (e.g., AWQ, or low-bit weights packaged in the GGUF format), and highly optimized local hardware (NPUs, Apple Silicon), developers are deploying highly capable agents directly onto user devices. These "Sovereign Agents" run locally without ever calling OpenAI or Anthropic APIs. They maintain their own localized vector databases for semantic memory and execute tasks natively within the host machine's environment.
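A local semantic memory can be as simple as an in-process list of normalized vectors searched by cosine similarity. In this sketch `embed` is a hypothetical stand-in (a hashed bag-of-words, not a learned model) for whatever small embedding model the device runs, and `LocalMemory` is likewise illustrative rather than any particular vector database.

```python
import math
import re
import zlib
from typing import List, Tuple

DIM = 1024

def embed(text: str) -> List[float]:
    """Toy embedding: hashed bag of words, L2-normalised.
    A hypothetical stand-in for a small on-device embedding model."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(word.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

class LocalMemory:
    """In-process semantic memory: nothing here touches the network."""

    def __init__(self) -> None:
        self.items: List[Tuple[str, List[float]]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        # Dot product equals cosine similarity because all vectors are unit-length.
        scored = [(sum(a * b for a, b in zip(q, v)), text) for text, v in self.items]
        scored.sort(reverse=True)
        return [text for _, text in scored[:k]]

memory = LocalMemory()
for note in ("Patient prefers morning appointments",
             "Invoice 42 was paid in March",
             "The quarterly report is due Friday"):
    memory.add(note)
print(memory.search("When is the quarterly report due?"))  # ['The quarterly report is due Friday']
```

The same structure scales to a real on-device vector store; what matters for the privacy argument is that both the embeddings and the search run inside the host process.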

Pros

- Near-Zero Marginal Cost: Once the model is downloaded, inference carries no per-token fees; the only running costs are local power and hardware. A local swarm can run 24/7 in the background without incurring API token bills.

- Absolute Privacy: Because data never leaves the device, local agents sidestep many of the third-party data-transfer and processing hurdles imposed by compliance regimes such as HIPAA, GDPR, and SOC 2.

- Zero-Latency Interactions: Stripping away network latency allows for real-time, voice-to-voice agent interactions that feel instantaneous.

Cons

- Hardware Constrained: The intelligence of the agent is strictly bounded by the VRAM and compute power of the host device.

- Lower IQ for Complex Tasks: While SLMs excel at routing, summarization, and basic coding, they still fall short of frontier cloud models (like GPT-5 or Claude Opus 4) when it comes to deep, multi-step logical reasoning.
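The VRAM bound is easy to estimate for the weights themselves: parameters × bits per weight ÷ 8 gives bytes. The 7-billion-parameter figure below is a hypothetical example within the sub-10B range mentioned earlier, and the estimate deliberately excludes the KV cache, activations, and runtime overhead, so real requirements are higher.

```python
def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Memory for model weights alone, in decimal GB (1 GB = 1e9 bytes).
    Excludes the KV cache, activations, runtime overhead, and quantization scale factors."""
    return params * bits_per_weight / 8 / 1e9

for bits in (16, 8, 4):
    print(f"7B parameters at {bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
# 7B parameters at 16-bit: 14.0 GB
# 7B parameters at 8-bit: 7.0 GB
# 7B parameters at 4-bit: 3.5 GB
```

This is also why quantization matters for edge deployment: dropping from 16-bit to 4-bit weights cuts weight memory by a factor of four, often the difference between fitting on a consumer device or not.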

Driven by privacy concerns, cloud costs, and the need for zero-latency interactions, this market focuses on highly compressed, fiercely capable Small Language Models (SLMs) and the hardware to run them natively.

Company / Framework Comparison

5. Domain-Specific "Vertical" Agents

Generic AI assistants are rapidly being replaced by hyper-specialized "Digital Employees." A generic agent that can write both a poem and Python code is a neat trick, but enterprise value demands agents that possess deep, unshakeable expertise in a single, narrow vertical.

Architectural Methodology

Domain-Specific Language Models (DSLMs) are built by heavily fine-tuning foundation models on proprietary, highly technical datasets. Furthermore, they are deeply integrated with industry-specific Retrieval-Augmented Generation (RAG) pipelines. For example, an "Autonomous Legal Discovery Agent" is not just prompted to act like a lawyer; it is hard-wired into a vector database containing a specific firm's entire 50-year history of case law, deposition transcripts, and contract templates.
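The RAG side of a vertical agent can be sketched as a metadata filter (only the document types relevant to the question), followed by retrieval and a prompt that forces citations. The corpus, document IDs, and lexical scorer are hypothetical; a production pipeline would use an embedding index and a fine-tuned model rather than word overlap.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set

@dataclass(frozen=True)
class Doc:
    doc_id: str
    kind: str  # e.g. "case_law", "deposition", "contract_template"
    text: str

def _terms(text: str) -> Set[str]:
    return set(text.lower().replace("?", " ").split())

def retrieve(corpus: Iterable[Doc], query: str, kinds: Set[str], k: int = 3) -> List[Doc]:
    """Metadata filter first, then a toy lexical score (a real pipeline would use embeddings)."""
    q = _terms(query)
    candidates = [d for d in corpus if d.kind in kinds]
    scored = sorted(candidates, key=lambda d: len(q & _terms(d.text)), reverse=True)
    return [d for d in scored[:k] if q & _terms(d.text)]

def build_prompt(query: str, docs: List[Doc]) -> str:
    """Ground the model in retrieved sources and require citations by ID."""
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return ("Answer using only the sources below and cite their IDs. "
            "If the sources are insufficient, say so.\n\n"
            f"{context}\n\nQuestion: {query}")

# Hypothetical firm corpus.
corpus = [
    Doc("D-001", "deposition", "Witness stated the shipment arrived late"),
    Doc("C-104", "case_law", "Late delivery is a breach when time is of the essence"),
    Doc("T-007", "contract_template", "Standard indemnity clause for software licences"),
]
docs = retrieve(corpus, "Is late delivery a breach?", kinds={"case_law"})
print(build_prompt("Is late delivery a breach?", docs))
```

Filtering on document type before scoring is a cheap way to encode domain knowledge (a question about precedent should not be answered from a deposition), and the citation requirement makes each answer auditable against the firm's own records.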

Pros

- Unmatched Domain Accuracy: Vertical agents understand the nuanced jargon, regulatory constraints, and standard operating procedures of their specific industry far better than any generalized model.

- High Commercial Moats: Startups building these agents inherently build massive data moats. A generic AI competitor cannot easily replicate a medical billing agent trained on millions of proprietary, HIPAA-compliant patient records.

Cons

- Massive Upfront Investment: Curating the pristine datasets required for fine-tuning and building complex, domain-specific evaluation frameworks is highly capital- and labor-intensive.

- Lack of Adaptability: A world-class financial auditing agent is completely useless if asked to schedule a calendar appointment or write a marketing email.

These companies have abandoned the race for Artificial General Intelligence (AGI) to build highly lucrative, deeply specialized "Digital Employees" with massive, proprietary data moats.

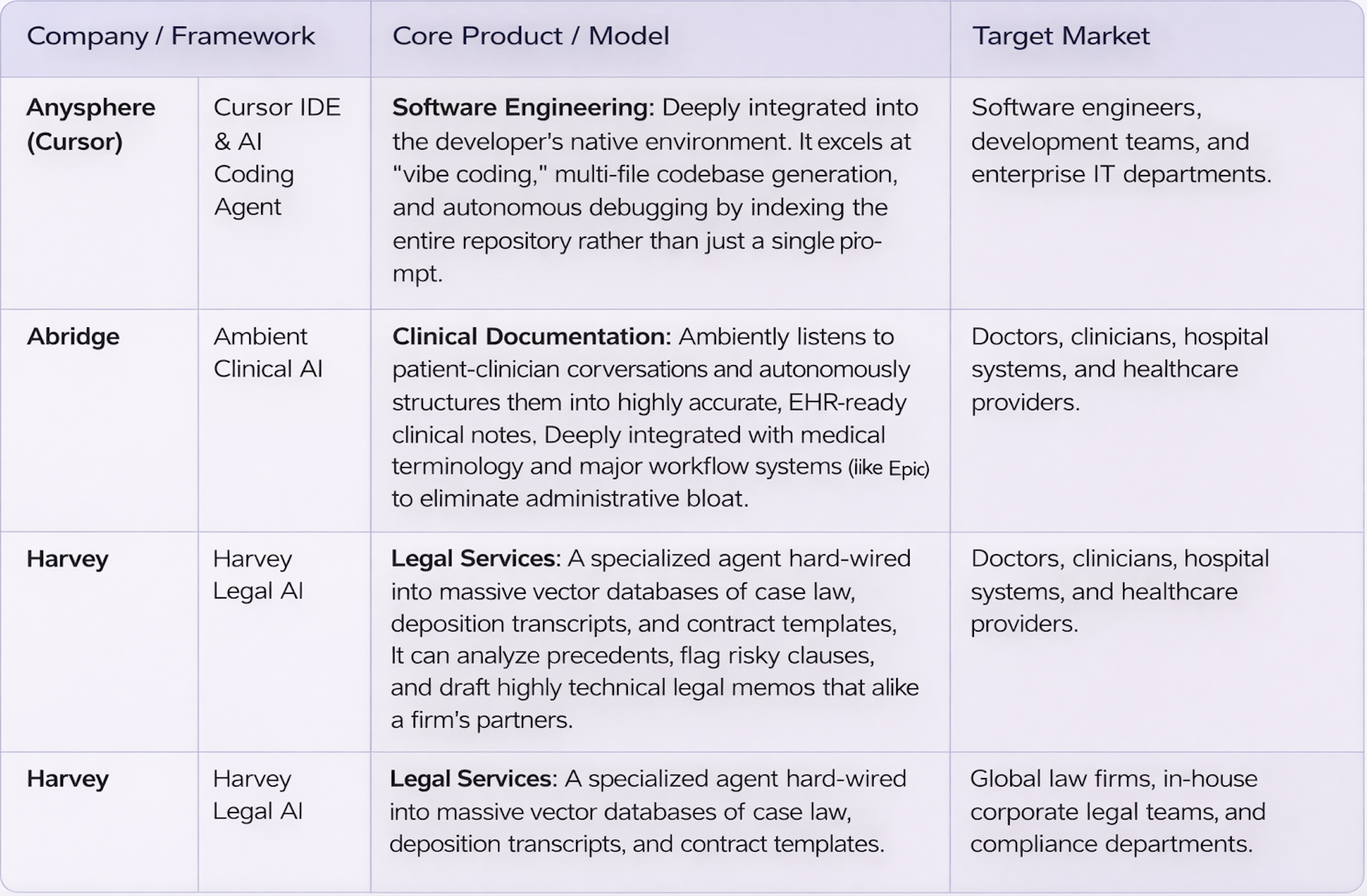

Company / Framework Comparison

The Economic Verdict: Where is the Trillion-Dollar Value?

While General Computer Use captures the public imagination—the idea of a bot moving your mouse to book a flight feels like science fiction realized—it is fundamentally a consumer-grade convenience.

When we analyze where the true economic value is concentrating over the next 5 to 10 years, the unequivocal winner is the combination of Graph-Based API Orchestration driving Multi-Agent Swarms within enterprise environments.

Here is why this systemic approach will dominate the global economy:

1. The B2B Intermediation Wave:

Gartner forecasts that by 2028, over $15 trillion of B2B spend will be intermediated by AI agents. This level of commerce requires absolute security, instant transactional speed, and zero margin for error. You cannot run a global supply chain or process millions in financial trades using a VLA model clicking around a graphical interface. It requires agents communicating directly with other agents via secure, authenticated APIs.
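Authenticated agent-to-agent calls are usually secured at several layers. One common building block is HMAC request signing, sketched below with Python's standard `hmac` module. The message shape, key, and purchase-order fields are hypothetical, and this is not any specific agent protocol; a production design would add nonces against replay, key rotation, and TLS.

```python
import hashlib
import hmac
import json
import time

SHARED_KEY = b"demo-key"  # hypothetical; real deployments pull per-partner keys from a secret store

def _mac(key: bytes, timestamp: int, body: str) -> str:
    return hmac.new(key, f"{timestamp}.{body}".encode(), hashlib.sha256).hexdigest()

def sign(payload: dict, key: bytes, timestamp: int) -> dict:
    """Sender agent: canonicalise the JSON body, then sign timestamp + body."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return {"body": body, "timestamp": timestamp, "signature": _mac(key, timestamp, body)}

def verify(message: dict, key: bytes, now: int, max_skew: int = 300) -> dict:
    """Receiver agent: reject stale or tampered messages before acting on them."""
    if abs(now - message["timestamp"]) > max_skew:
        raise ValueError("stale message")
    expected = _mac(key, message["timestamp"], message["body"])
    if not hmac.compare_digest(expected, message["signature"]):  # constant-time comparison
        raise ValueError("bad signature")
    return json.loads(message["body"])

now = int(time.time())
msg = sign({"po": "PO-1", "sku": "WIDGET-9", "qty": 500}, SHARED_KEY, now)
print(verify(msg, SHARED_KEY, now)["qty"])  # 500

tampered = dict(msg, body=msg["body"].replace('"qty":500', '"qty":5000'))
try:
    verify(tampered, SHARED_KEY, now)
except ValueError as exc:
    print(exc)  # bad signature
```

Because the receiving agent verifies before acting, a modified quantity or a replayed old order is rejected deterministically, which is the property a GUI-driven agent cannot offer.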

2. Determinism is the Currency of the Enterprise:

Enterprises do not buy software for its novelty; they buy it for its reliability. Graph-based orchestration provides the "Agentic OS"—the necessary guardrails, audit trails, and state management required to deploy autonomous systems into mission-critical environments. If an agent hallucinates, a graph architecture allows engineers to pinpoint the exact node and token where the logic failed. GCU offers no such debuggability.

3. The Shift to Systemic Workflows:

We are moving from "AI as a feature" to "AI as the workforce." A multi-agent API swarm effectively digitizes entire departments. A company no longer deploys a single "marketing AI"; they deploy an orchestrator that manages a Research Agent, a Copywriter Agent, an SEO Agent, and an Analytics Agent—all interacting seamlessly with the company's headless CMS and CRM via APIs.

The companies that will generate the most wealth in the late 2020s are not those building the smartest foundational models, nor those building clever desktop macros. The winners will be the infrastructure providers—the companies building the complex, graph-based plumbing that allows swarms of specialized agents to securely route, reason, and execute against the world's APIs.